Piotr Mirowski and Yann LeCun

European Conference on Machine Learning (ECML), 2009

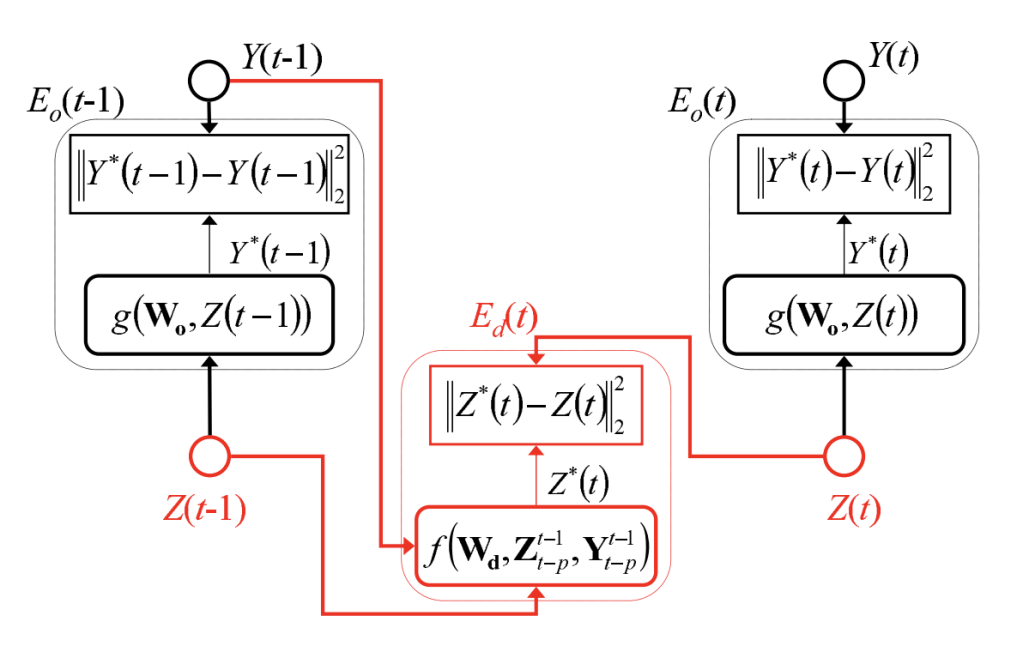

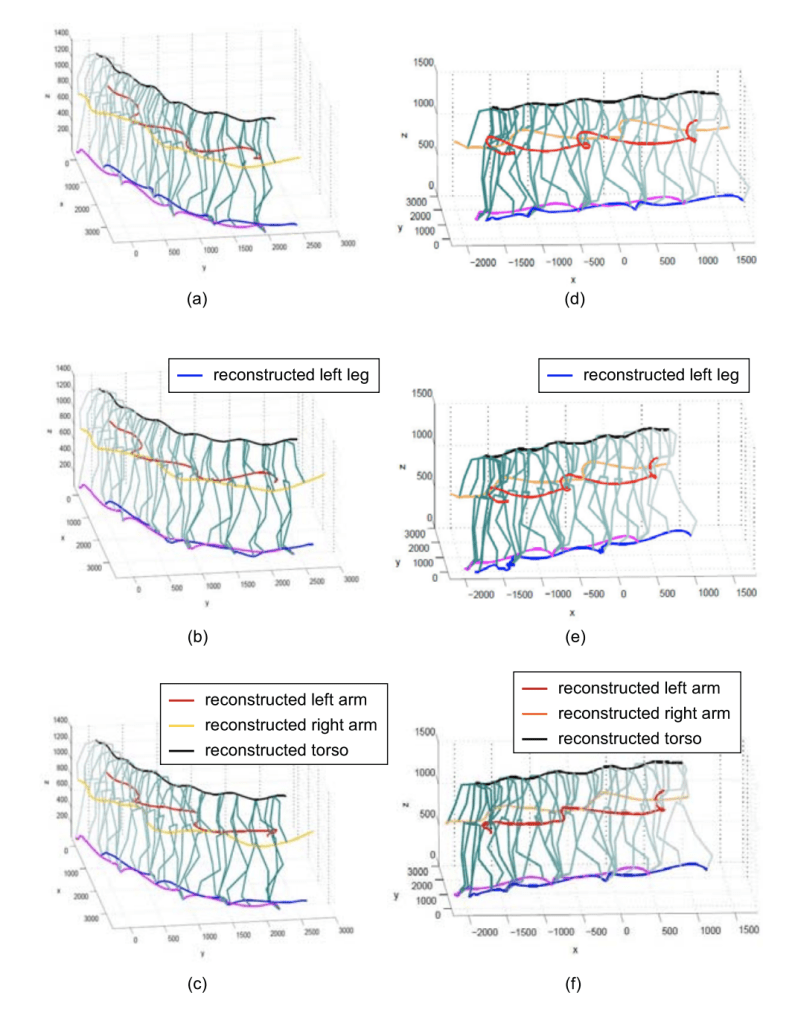

This article presents a method for training Dynamic Factor Graphs (DFG) with continuous latent state variables. A DFG includes factors modeling joint probabilities between hidden and observed variables, and factors modeling dynamical constraints on hidden variables. The DFG assigns a scalar energy to each configuration of hidden and observed variables. A gradient-based inference procedure finds the minimum-energy state sequence for a given observation sequence. Because the factors are designed to ensure a constant partition function, they can be trained by minimizing the expected energy over training sequences with respect to the factors’ parameters. These alternated inference and parameter updates can be seen as a deterministic EM-like procedure. Using smoothing regularizers, DFGs are shown to reconstruct chaotic attractors and to separate a mixture of independent oscillatory sources perfectly. DFGs outperform the best known algorithm on the CATS competition benchmark for time series prediction. DFGs also successfully reconstruct missing motion capture data.

capture marker data.

Paper link: Springer.